
Disclaimer: The details in this post are derived from information shared online by the Salesforce Engineering Team. All credit for the technical details goes to the Salesforce Engineering Team. Links to the original articles and sources appear in the references section at the end of the post. We have attempted to analyze the details and provide our input about them. If you find any inaccuracies or omissions, please leave a comment, and we will do our best to fix them.

Modern software systems run on millions of automated tests every single day. At Salesforce, this testing ecosystem operates at an enormous scale.

The company runs about 6 million tests daily, covering more than 78 billion possible test combinations. These tests generate around 150,000 failures every month, while engineers submit more than 27,000 code changelists each day.

Before automation, dealing with these failures was a slow and tiring process. Developers had to spend hours going through error logs, changelists, and internal tracking systems like GUS to figure out what went wrong. Integration failures were especially difficult because any of the 30,000 engineers across the company could be responsible for a given issue. This made it hard to find the root cause and fix the problem quickly.

The result was a growing backlog of unresolved failures, increasing developer frustration, and long delays. On average, it took about seven days to resolve a single test failure. The Salesforce engineering team recognized that this was not sustainable. They needed a faster, more reliable way to handle failures and keep the development process moving smoothly. This challenge set the stage for building an AI-powered solution to remove these bottlenecks.
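To put these numbers in perspective, here is a rough back-of-the-envelope sketch in Python. The test, failure, and resolution-time figures come from the paragraphs above. The 30-day month, the steady arrival of failures, and the use of Little's law to estimate the number of open failures are our own assumptions for illustration, not figures reported by Salesforce.

```python
# Figures quoted in the article.
TESTS_PER_DAY = 6_000_000
FAILURES_PER_MONTH = 150_000
MEAN_DAYS_TO_RESOLVE = 7

# Assumption (not from the source): a 30-day month with failures
# arriving at a roughly steady rate.
DAYS_PER_MONTH = 30


def failures_per_day(failures_per_month: float, days_per_month: int = DAYS_PER_MONTH) -> float:
    """Average number of new test failures per day."""
    return failures_per_month / days_per_month


def failure_rate(failures_per_month: float, tests_per_day: float,
                 days_per_month: int = DAYS_PER_MONTH) -> float:
    """Fraction of test runs that fail over one month."""
    return failures_per_month / (tests_per_day * days_per_month)


def open_backlog(arrivals_per_day: float, mean_days_to_resolve: float) -> float:
    """Little's law (L = lambda * W): average number of items in the system
    equals the arrival rate times the average time each item spends there."""
    return arrivals_per_day * mean_days_to_resolve


daily_failures = failures_per_day(FAILURES_PER_MONTH)
rate = failure_rate(FAILURES_PER_MONTH, TESTS_PER_DAY)
backlog = open_backlog(daily_failures, MEAN_DAYS_TO_RESOLVE)

print(f"New failures per day: {daily_failures:,.0f}")
print(f"Share of test runs that fail: {rate:.4%}")
print(f"Estimated open failures at steady state: {backlog:,.0f}")
```

Under these assumptions, fewer than 0.1% of test runs fail, yet a seven-day average resolution time would still leave tens of thousands of failures open at any given moment. This is only an estimate, but it illustrates why cutting resolution time matters more than the raw failure rate.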

In this article, we will look at how Salesforce developed such a system and the key takeaways from their journey.

The Goal of the System

Salesforce has a dedicated Platform Quality Engineering team that plays a critical role in the software development process.

This team acts as the final line of defense before any code is released to customers. While individual scrum teams focus on testing their own products in isolation, the Platform Quality Engineering team goes a step further. They run integration tests across multiple products to make sure everything works well together as one unified system.

This focus on integration is important because customers often use several Salesforce products in combination. A product might work perfectly on its own, but when used together with others, unexpected problems can appear. Customers sometimes describe this as the products feeling like they come from different companies. The Platform Quality Engineering team exists to catch these integration bugs early, before they ever reach customers. Fixing bugs after deployment is expensive and time-consuming, so identifying them early is a major priority for Salesforce.

Beyond this core testing role, the team is always looking for ways to automate engineering workflows and make developers more productive. One of the most time-consuming parts of their work was triaging large numbers of test failures.

To address this, the Salesforce engineering team set three clear goals:

- Reduce the amount of manual time engineers spend diagnosing failures
- Give developers clear, context-aware recommendations that help them fix issues quickly
- Build trust in AI tools by avoiding vague or incorrect suggestions that could waste time

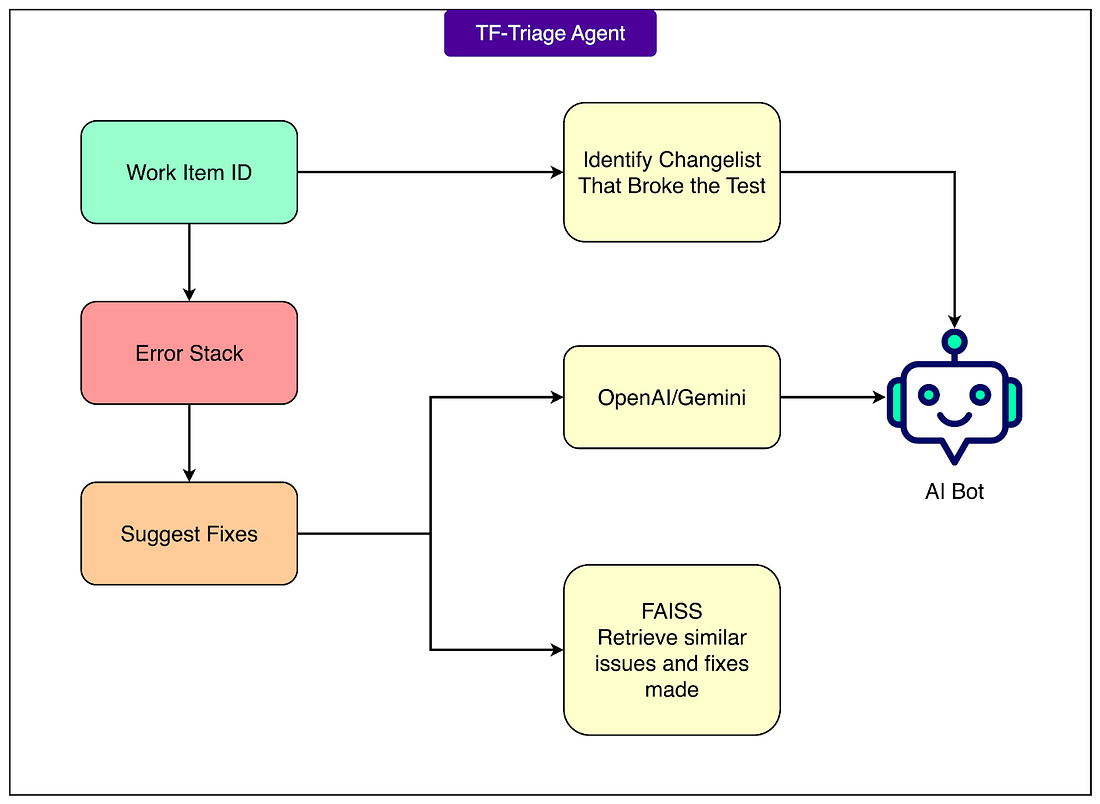

To meet these goals, Salesforce built the Test Failure (TF) Triage Agent, an AI-powered system that provides concrete recommendations within seconds of a failure occurring.

The TF Triage Agent is designed to transform what used to be a slow, manual triage process into a fast and reliable automated workflow. This system fits directly into the team’s mission of maintaining high product quality at scale while keeping the development process efficient.

AI and Automation Architecture

To build an AI-powered system that could process millions of test results quickly and accurately, the Salesforce engineering team designed a specialized AI and automation architecture.

This architecture had to work with massive amounts of noisy, unstructured error data while keeping response times under 30 seconds. Achieving this required a combination of intelligent data processing, search techniques, and careful system design.

Here are the main technical components of the architecture: