|

The right data. The right time.(Sponsored)

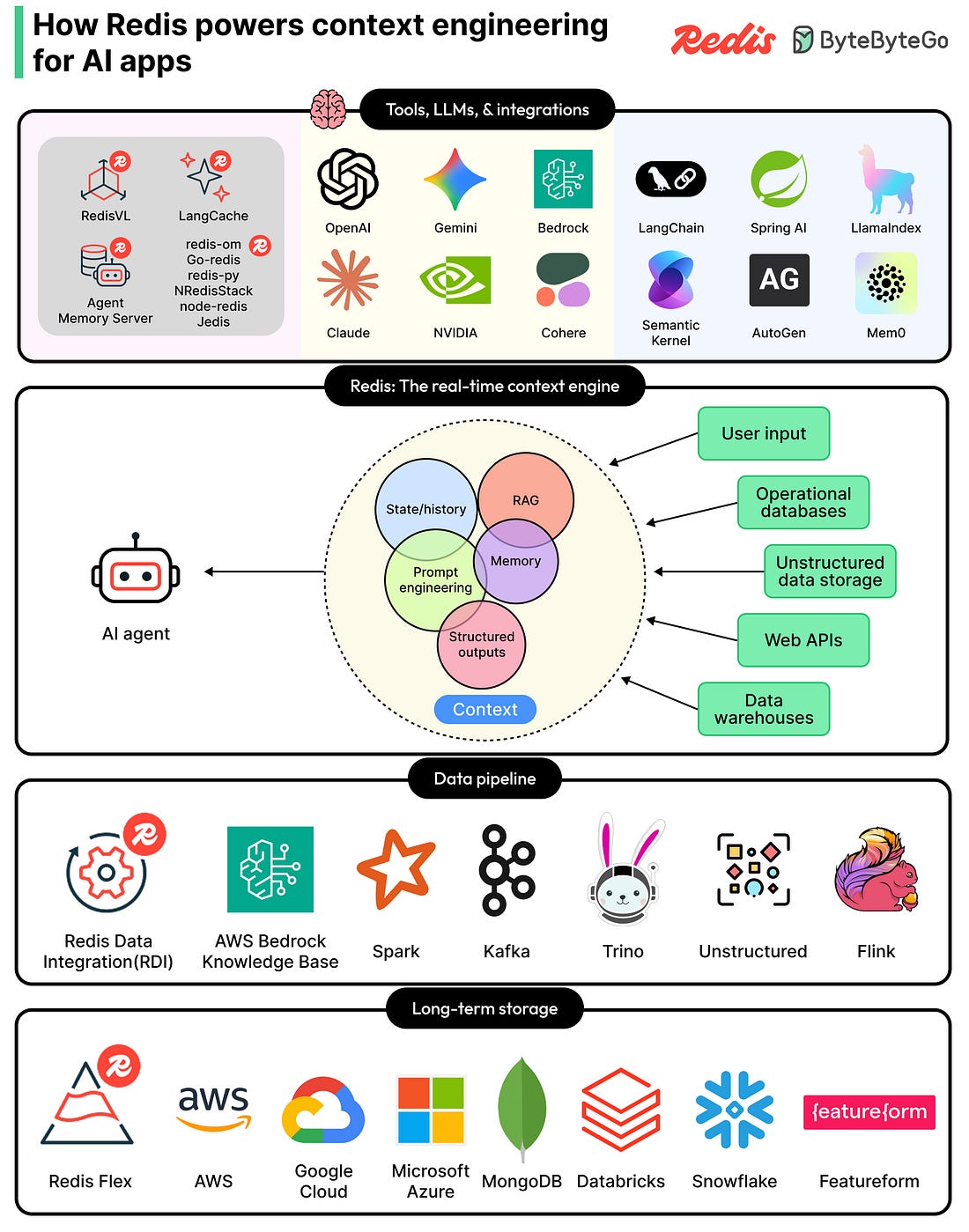

Context engineering is the new critical layer in every production AI app, and Redis is the real-time context engine powering it. Redis gathers, syncs, and serves the right mix of memory, knowledge, tools, and state for each model call, all from one unified platform. Search across RAG, short- and long-term memory, and structured and unstructured data without stitching together a fragile multi-tool stack. With 30+ agent framework integrations across OpenAI, LangChain, Bedrock, NVIDIA NIM, and more, Redis fits the stack your teams are already building on. Accurate, reliable AI apps that scale. Built on one platform.

Every time we open X (formerly Twitter) and scroll through the “For You” tab, a recommendation system decides which posts to show and in what order. It does this in real time, while the feed is loading.

On social media this matters, because even a small delay in loading the feed can frustrate users.

Until recently, the internal workings of this recommendation system were more or less a mystery. The xAI engineering team has now open-sourced the algorithm that powers this feed, publishing it on GitHub under an Apache-2.0 license. The code reveals a system built on a Grok-based transformer model that has replaced nearly all hand-crafted rules with machine learning.

In this article, we will look at what the algorithm does, how its components fit together, and why the xAI Engineering Team made the design choices they did.

Disclaimer: This post is based on publicly shared details from the xAI Engineering Team. Please comment if you notice any inaccuracies.

The Big Picture

When you request the For You feed in X, the algorithm draws from two separate sources of content:

The first source is called in-network content. These are posts from accounts you already follow. If you follow 200 people, the system looks at what those 200 people have posted recently and considers them as candidates for your feed.

The second source is called out-of-network content. These are posts from accounts you do not follow. The algorithm discovers them by searching across a global pool of posts using a machine learning technique called similarity search. If your past behavior suggests you would find a post interesting, that post becomes a candidate even if you have never heard of the author.

Both sets of candidates are then merged into a single list, scored, filtered, and ranked. The top-ranked posts are what you see when you open the app.

The Four Core Components

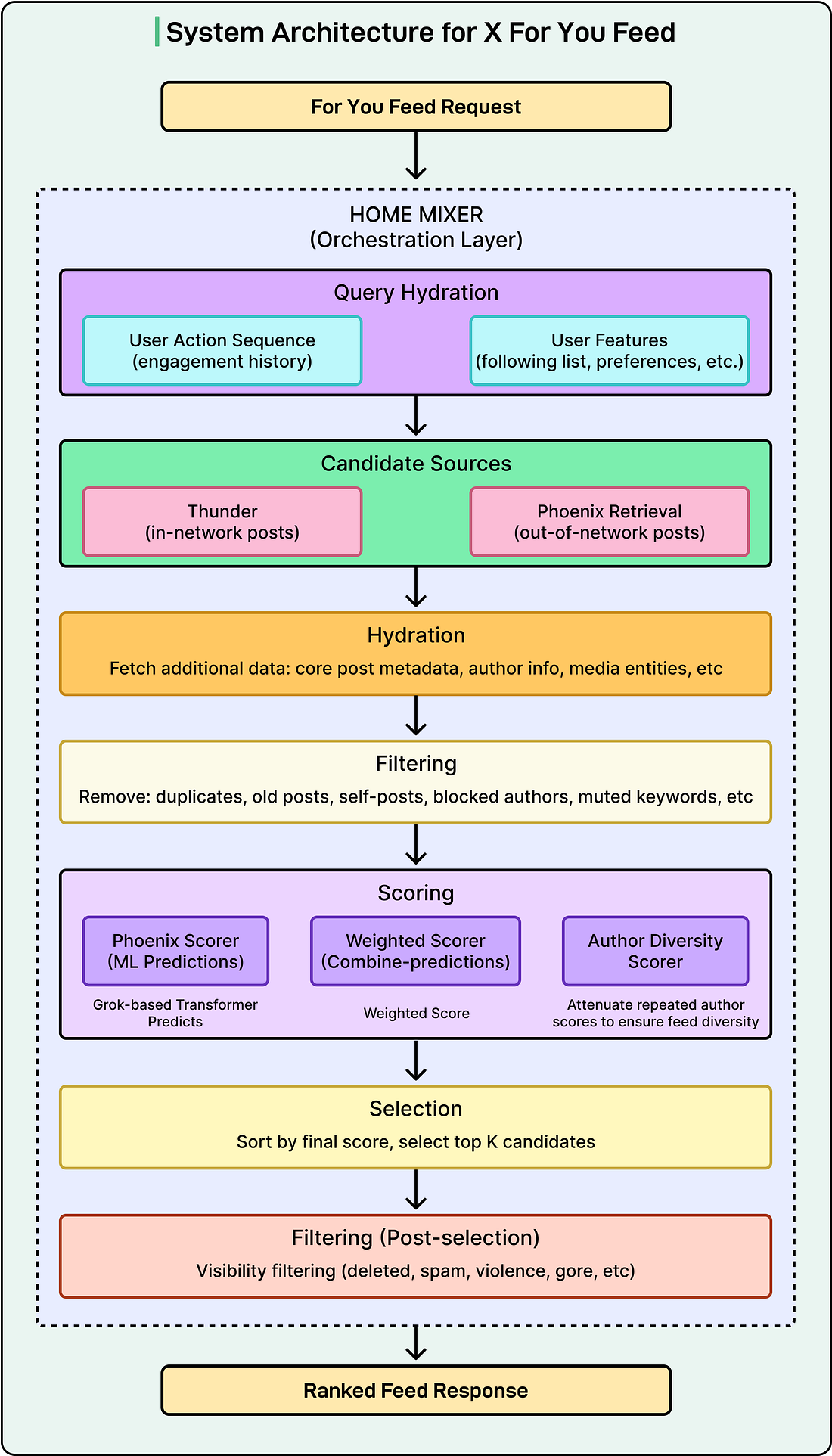

The diagram below shows the overall architecture of the system built by the xAI engineering team:

The codebase is organized into four main directories, each representing a distinct part of the system. The entire codebase is written in Rust (62.9%) and Python (37.1%).

Home Mixer

Home Mixer is the orchestration layer. It acts as the coordinator that calls the other components in the right order and assembles the final feed. It does not do the heavy ML work itself; it only manages the pipeline.

When a request comes in, Home Mixer kicks off several stages in sequence:

Fetching user context

Retrieving candidate posts

Enriching those posts with metadata

Filtering out the ineligible ones

Scoring the survivors

Selecting the top results and running final checks
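To make the ordering of these stages concrete, here is a minimal Python sketch of a staged pipeline. It is an illustration only, not the Home Mixer code: the `Post` class, `run_pipeline`, and every callable passed into it are hypothetical names invented for this example, and the toy filtering and scoring rules are assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Post:
    # Hypothetical post record used only for this sketch.
    post_id: int
    author_id: int
    metadata: dict = field(default_factory=dict)
    score: float = 0.0


def run_pipeline(user_id, fetch_context, retrieve, enrich,
                 is_eligible, score, final_check, top_n):
    """Run the stages in order; each stage is supplied as a callable."""
    context = fetch_context(user_id)                       # fetch user context
    candidates = retrieve(context)                         # retrieve candidates
    candidates = [enrich(p) for p in candidates]           # enrich with metadata
    candidates = [p for p in candidates
                  if is_eligible(p, context)]              # filter ineligible
    for p in candidates:                                   # score the survivors
        p.score = score(p, context)
    ranked = sorted(candidates, key=lambda p: p.score, reverse=True)
    selected = ranked[:top_n]                              # select top results
    return [p for p in selected if final_check(p)]         # final checks


def demo():
    # Toy data and rules, all assumed for illustration.
    posts = [Post(1, 10), Post(2, 20), Post(3, 30), Post(4, 10)]
    return run_pipeline(
        user_id=7,
        fetch_context=lambda uid: {"user_id": uid, "blocked_authors": {20}},
        retrieve=lambda ctx: posts,
        enrich=lambda p: Post(p.post_id, p.author_id,
                              {"length": p.post_id * 10}),
        is_eligible=lambda p, ctx: p.author_id not in ctx["blocked_authors"],
        score=lambda p, ctx: float(p.metadata["length"]),
        final_check=lambda p: True,
        top_n=2,
    )


if __name__ == "__main__":
    print([p.post_id for p in demo()])
```

Passing each stage in as a callable keeps the coordinator free of ML logic, which mirrors the role described above: the orchestrator only decides the order in which things run.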

The server exposes a gRPC endpoint called ScoredPostsService that returns the ranked list of posts for a given user.

Thunder

Thunder is an in-memory post store and real-time ingestion pipeline. It consumes post creation and deletion events from Kafka and maintains per-user stores for original posts, replies, reposts, and video posts.

When the algorithm needs in-network candidates, it queries Thunder, which can return results in sub-millisecond time because everything lives in memory rather than in an external database. Thunder also automatically removes posts that are older than a configured retention period, keeping the data set fresh.
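The idea of an in-memory store with a retention window can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not Thunder's implementation: the class and method names are hypothetical, events are passed in as plain method calls instead of being read from Kafka, a single store per author stands in for the separate stores for originals, replies, reposts, and video posts, and creation events are assumed to arrive in timestamp order for each author.

```python
from collections import defaultdict, deque


class InMemoryPostStore:
    """Hypothetical per-author post store with time-based eviction."""

    def __init__(self, retention_seconds):
        self.retention_seconds = retention_seconds
        # author_id -> deque of (created_at, post_id), oldest first.
        self._posts_by_author = defaultdict(deque)

    def on_create(self, author_id, post_id, created_at):
        # Assumes events for one author arrive in timestamp order.
        self._posts_by_author[author_id].append((created_at, post_id))

    def on_delete(self, author_id, post_id):
        posts = self._posts_by_author.get(author_id)
        if posts is None:
            return
        self._posts_by_author[author_id] = deque(
            entry for entry in posts if entry[1] != post_id
        )

    def evict_expired(self, now):
        # Drop posts older than the retention window from the front.
        cutoff = now - self.retention_seconds
        for posts in self._posts_by_author.values():
            while posts and posts[0][0] < cutoff:
                posts.popleft()

    def recent_posts(self, followed_author_ids, now):
        """Return post IDs from followed authors that are still retained."""
        self.evict_expired(now)
        result = []
        for author_id in followed_author_ids:
            for _, post_id in self._posts_by_author.get(author_id, ()):
                result.append(post_id)
        return result


store = InMemoryPostStore(retention_seconds=100)
store.on_create(author_id=1, post_id=101, created_at=0)
store.on_create(author_id=1, post_id=102, created_at=50)
store.on_create(author_id=2, post_id=201, created_at=60)
store.on_delete(author_id=1, post_id=102)
fresh = store.recent_posts([1, 2], now=90)
later = store.recent_posts([1, 2], now=150)
```

Because every lookup is a dictionary access followed by a walk over a short deque, no network or disk round trip is involved, which is the property the in-memory design relies on.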

Phoenix

Phoenix is the ML brain of the system. It has two jobs:

Job 1: Retrieval

Phoenix uses a two-tower model to find out-of-network posts:

One tower (the User Tower) takes your features and engagement history and encodes them into a mathematical representation called an embedding.

The other tower (the Candidate Tower) encodes every post into its own embedding.

Finding relevant posts then becomes a similarity search. The system computes a dot product between your user embedding and each candidate embedding and retrieves the top-K most similar posts. If you are unfamiliar with dot products, the core idea is that two embeddings that “point in the same direction” in a high-dimensional space produce a high score, meaning the post is likely relevant to you.
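The dot-product scoring and top-K selection described above can be sketched in plain Python. The two-dimensional embeddings and the helper names `dot` and `top_k` are made up for illustration; real embeddings have many more dimensions and come from the trained towers. This sketch also scores every candidate exhaustively, which is the simplest possible approach and not necessarily how Phoenix performs the search.

```python
import heapq


def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def top_k(user_embedding, candidate_embeddings, k):
    """Return the k post IDs whose embeddings score highest for the user."""
    scored = (
        (dot(user_embedding, embedding), post_id)
        for post_id, embedding in candidate_embeddings.items()
    )
    return [post_id for _, post_id in heapq.nlargest(k, scored)]


# Toy embeddings, assumed for illustration.
user = [1.0, 0.0]
candidates = {
    "a": [0.9, 0.1],   # points almost the same way as the user: score 0.9
    "b": [-1.0, 0.0],  # points the opposite way: score -1.0
    "c": [0.5, 0.5],   # partially aligned: score 0.5
    "d": [0.0, 1.0],   # orthogonal: score 0.0
}
best = top_k(user, candidates, k=2)
print(best)  # ['a', 'c']
```

Post "a" wins because its embedding points in nearly the same direction as the user embedding, while "b", which points the opposite way, gets the lowest score.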