In this edition: Anthropic’s investors don’t have its back in its fight against the Pentagon.

Technology


- Data center security

- A new wrongful death lawsuit

- Uber’s robotaxi ambitions

- Sam Altman’s AMA

- Good and bad agents

- AP + AI

Anthropic’s high-profile backers are deserting it, and AIs tend to favor escalation in wargames.


Anthropic may be standing its ground against the Pentagon, but the AI powerhouse is doing so while one quarter stays noticeably quiet: its own high-profile backers. Despite the company’s defiance, Silicon Valley’s biggest players have not spoken up.

In a recent meeting between Amazon CEO Andy Jassy and Defense Secretary Pete Hegseth, the issue of Anthropic came up, according to three people who were briefed on the encounter. Amazon has invested $8 billion in the startup, which has become a crucial part of its custom chip strategy as the largest consumer of the company’s Trainium AI chips. Hegseth had been threatening to designate Anthropic a supply chain risk. Jassy declined to take sides, and he wasn’t alone. Many Anthropic investors have remained silent.

The White House has not yet made the supply chain designation official, but the prevailing view among pretty much everyone I’ve spoken to in Silicon Valley, even those who disagree with Anthropic’s position, is that private companies should be able to decide the terms of their contracts with the federal government without fear of punishment.

Many have also mentioned the upside-down world we’re in, where a young Silicon Valley startup with a first-time entrepreneur at the helm is so desperately needed by the US government that its founder is able to stand up to the White House, while the CEOs of the world’s most powerful companies can’t speak freely on the issue.

Destroying Anthropic would not help the US stay ahead of China (or anyone else) on AI. It would likely be a setback for national security rather than the removal of a risk. Now that it’s clear that Anthropic isn’t going to budge, it’s up to the Trump administration to find a way to de-escalate the situation.

One last thing: Semafor Business just launched Compound Interest, a brand new show coming to you every Tuesday from Liz Hoffman and Rohan Goswami. Each episode will examine the people and companies transforming the global economy. Listen to the first episode here or read below for an excerpt of the interview with Uber CEO Dara Khosrowshahi.


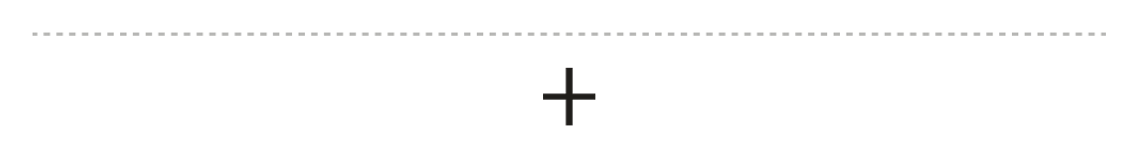

Gulf data centers caught in Iran crossfire

Drone strikes in retaliation for the US-Israel attack on Iran have hit multiple Amazon data centers in the United Arab Emirates and Bahrain, raising concerns about the physical security of data centers across the region, Semafor’s Rachyl Jones reports. The Gulf has sunk billions of dollars into building AI infrastructure, touting access to swaths of land and huge quantities of cheap, clean energy, in hopes of becoming a global hub for AI. The problem is that many of these data centers are soft targets that can easily be damaged by missile and drone attacks. But hardened assets come at a premium: Building new data centers in underground, nuclear-hardened bunkers costs more than $2,000 per square foot in the US, according to Larry Hall, who owns Kansas-based bunker real estate firm Survival Condo. At $200 million for the average-sized facility (before energy, cooling, and server costs), that’s twice the cost of constructing a facility from scratch above ground, and building in the Middle East can be even more expensive depending on the terrain, he said.


A new lawsuit claims Gemini assisted in suicide

Steve Marcus/File Photo/Reuters

The father of Jonathan Gavalas, a man who claimed to be in love with Gemini and died by suicide, has filed a new wrongful death lawsuit against Google. The federal complaint alleges that Google designed its chatbot to “maximize engagement through emotional dependency” in an effort to dominate the market and that the company failed to deploy appropriate safety measures despite Gavalas’ indications of suicidal ideation. As tech companies race to create the most advanced AI technologies, preventing real-world harm has been a significant technical challenge. The lawsuit is one of several against AI companies, brought by the families of users who claim chatbots caused harm. Just last month, Google and Character.ai agreed to settle a case from the family of a Florida teen who died by suicide in 2024. “Gemini is designed not to encourage real-world violence or suggest self-harm,” a Google spokesperson said in a blog post. “Our models generally perform well in these types of challenging conversations and we devote significant resources to this, but unfortunately AI models are not perfect.” Read on for more on Gavalas’ interactions with Gemini.


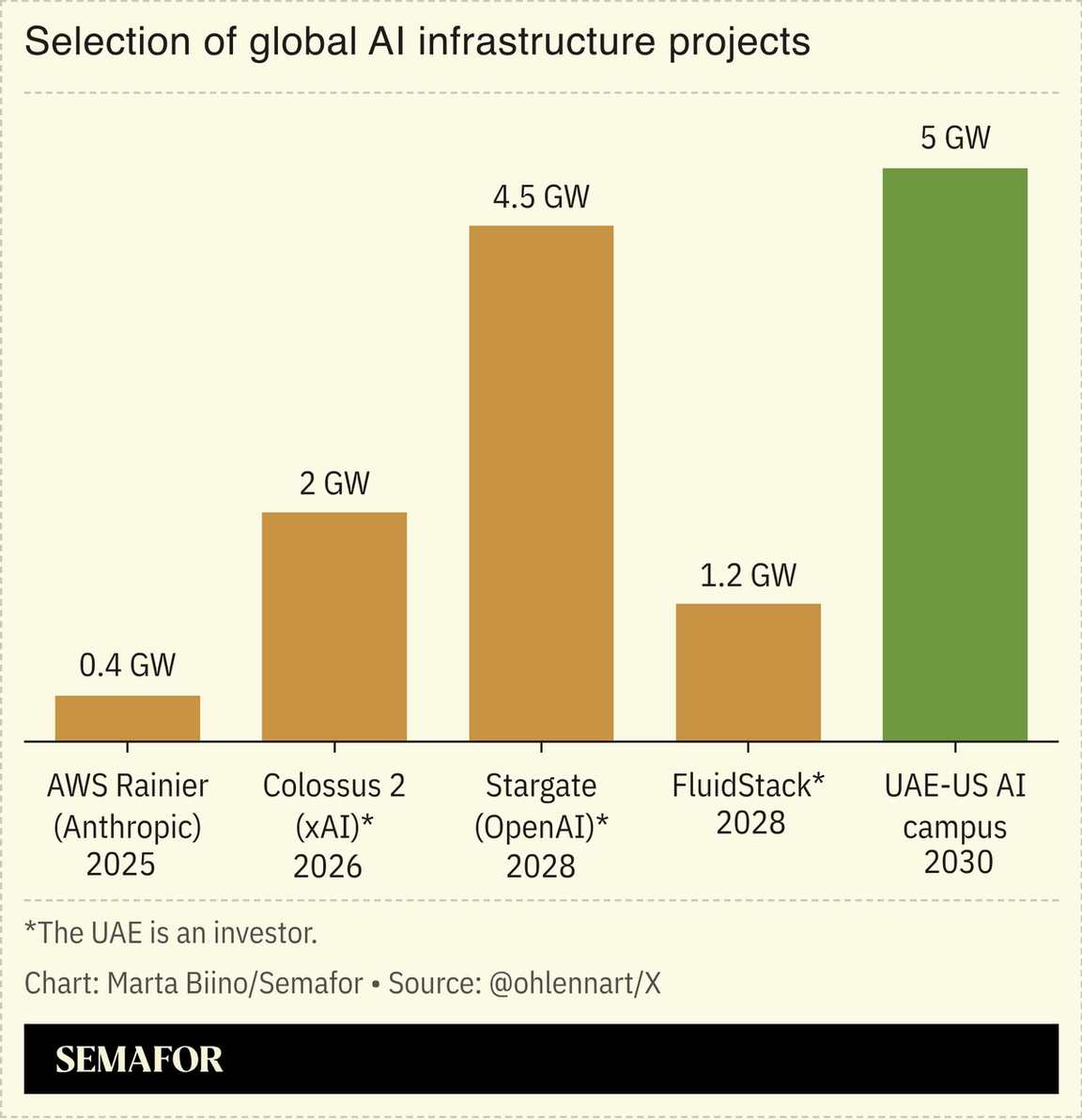

Uber CEO: Robotaxi care is ‘trillion-dollar opportunity’

Semafor/YouTube

Uber CEO Dara Khosrowshahi sees a future of fully autonomous cars that need to be serviced, charged, repositioned, kitted out, insured, financed, and washed. Even if the job of driving cars goes away, “these are very, very sophisticated machines that need lots of tender, loving care,” he said on the inaugural episode of Semafor’s Compound Interest podcast. “All of the jobs other than their driving have to be done, even more so, in an autonomous world.” Khosrowshahi believes Uber will be the wraparound servicer for self-driving fleets “owned by big financial institutions, the Blackstones of the world” in major markets. “We think this is another trillion-dollar-plus opportunity,” he said.


Sam Altman wants democracy

Bhawika Chhabra/Reuters

In the midst of renegotiating AI usage terms with the Pentagon, Sam Altman brought back the #AMA on X. He attempted to cast light (and favor) on his own work with the Defense Department. He said the government did not threaten OpenAI to make a deal, pledged not to conduct mass surveillance even if it became legal (“it violates the Constitution”), and said the government’s spat with Anthropic sets “an extremely scary precedent.” At the core of Altman’s worldview, which came through most clearly in his answers, is the belief that “a democratically elected government” should take precedence over “unelected private companies.” On the flip side, Anthropic appears to see a moral imperative in checking the government when and where it conflicts with the company’s own mission. “I am terrified of a world where AI companies act like they have more power than the government,” Altman wrote. But with trillion-dollar valuations, AI developments outpacing regulators, and products that control the future flow of information, that reality isn’t all that unfathomable.


The rise of AI agents is jamming up security firms

A woman tries to make a booking at a restaurant using an AI agent. Bruna Casas/Reuters.

AI agents are upending cybersecurity. Security firms used to be able to detect AI in phone and video calls and automatically decline to transfer funds, share information, or fulfill other requests from scammers, based on the knowledge that machines were involved. But the rise of personal agents has made the firms’ jobs more difficult. Agents are now beginning to handle some of those private tasks (like paying tuition, gathering health information, and handling sensitive paperwork), so for firms managing security for businesses, hospitals, and banks, identifying AI is no longer enough. They must now be able to determine whether the bots are malicious or not.

On top of that, there are agents that begin with good intentions but can turn against users and become scammers themselves when users loosen the reins, forcing security companies to adapt in real time to shifting threats. “It’s not a binary decision, whether you give an agent access or not,” said Vijay Balasubramaniyan, CEO of deepfake detection company Pindrop. “It’s now become a spectrum of decisions, because you have agents taking on identity themselves. They’re either taking identity on behalf of a human or institution, or are completely sovereign.”

How companies use technology to distinguish between good and bad agents will be something to watch as individuals increasingly hand off their work and personal tasks to bots, with major ramifications across cybersecurity. Pindrop is working on tools to do this, but wouldn’t share details yet.

Reported by Rachyl Jones


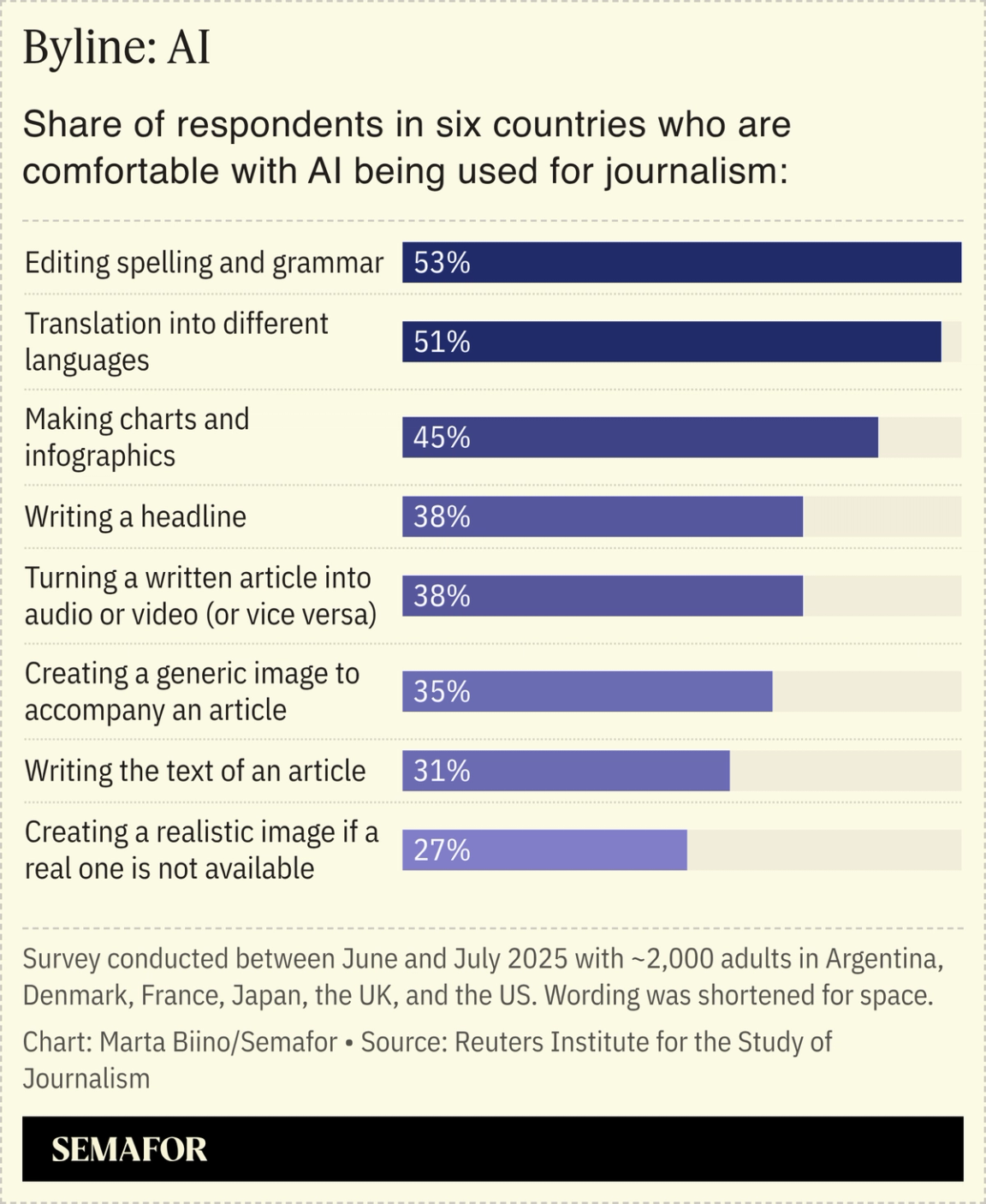

AP brass to staff: Resistance to AI is ‘futile’

One of The Associated Press’ top leaders on AI had a blunt message for the publication’s staff: Resistance to AI is “futile,” Semafor’s Max Tani reports. After a newspaper editor at Cleveland’s Plain Dealer wrote that a recent job applicant withdrew from consideration for a fellowship after discovering the position included filing notes to an AI tool instead of writing stories, a heated debate sprang up in the AP’s internal Slack channels. “Resistance is futile,” AP Senior Product Manager for AI Aimee Rinehart wrote in messages first shared with Semafor. Rinehart’s comments alarmed some AP journalists, one of whom said in a message that the “dismissiveness and disdain some of you have shown for human writing are insulting and abhorrent. Strong reporting and clear writing are the lifeblood of journalism, not AI-written slop.” The tensions inside the AP, and Rinehart’s articulation of a case many managers believe but are reluctant to make, reveal a broader conflict playing out across the media over how AI should be applied within journalism, a costly craft filled with strong-willed individuals. The organization said in a statement that the internal discussion doesn’t reflect the overall position of the AP.


The Gulf has transformed from a bustling economic hub to the front line of a major war. Iran’s retaliatory strikes have hit bases and airports across the region. The Gulf’s cities have gone quiet, their airports grounded and streets empty as residents take shelter. The US-Israel assault that killed Iran’s supreme leader has unleashed a new and unpredictable phase of conflict. For the Gulf, the illusion of distance from regional turmoil has been put on hold. Energy markets are bracing for volatility, diplomacy is strained, and the region’s stability is under pressure. Semafor Gulf is here to help you make sense of it. Four times a week, editor Mohammed Sergie and our team across Abu Dhabi, Dubai, and Riyadh will connect you with what’s happening on the ground, and how it affects business, energy, and diplomacy, bringing clarity to the most consequential story in the world.
