If AI is a weapon, why don't we regulate it like one?

Thoughts on the fight between Anthropic and the Department of War.

If you haven’t heard about the fight between the AI company Anthropic and the U.S. Department of War, you should read about it, because it could be critical for our future — as a nation, but also as a species.

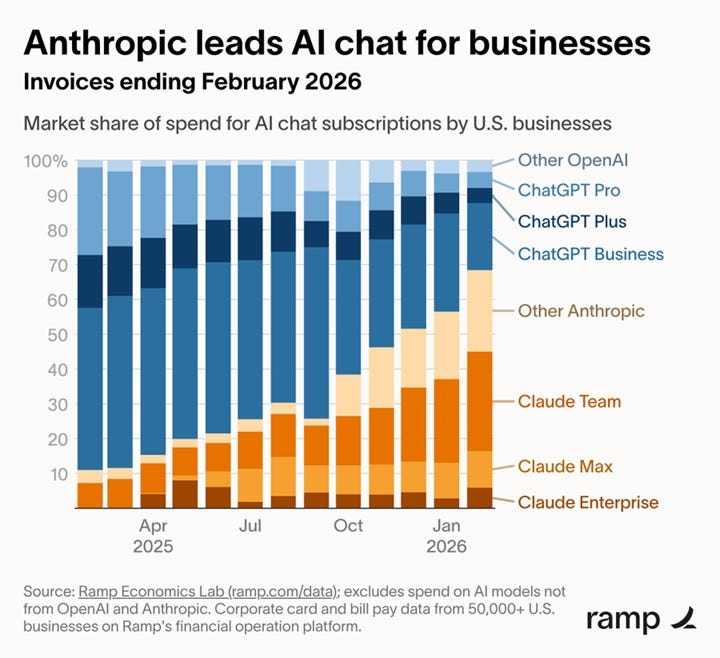

Anthropic, along with OpenAI, is one of the two leading AI model-making companies. OpenAI has held a very narrow lead on most capabilities for most of the past few years, but Anthropic is beginning to win the race in terms of business adoption:

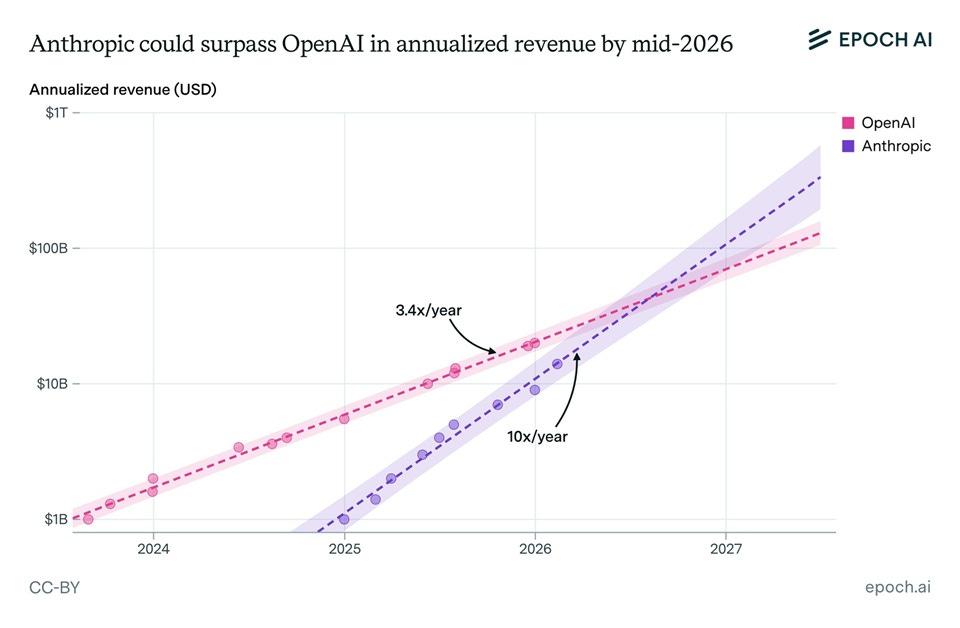

This is because of Anthropic’s different business model. It focused more on AI for coding than on chatbots in general, and also focused on partnering with businesses to help them use AI. This may pay eventual dividends in terms of capabilities, if Anthropic beats OpenAI to the goal of recursive AI self-improvement. And it’s already paying dividends in the form of faster revenue growth:

Anthropic had partnered with the Department of War (previously the Department of Defense) since the Biden years. But the company, which is known for its more values-oriented culture, has begun to clash with the Trump administration in recent months. The administration sees Anthropic as “woke” due to its concern over the morality of things like autonomous drone swarms and AI-based mass surveillance.

The fight boiled over a week ago, when the administration stopped working with Anthropic, switched to working with OpenAI, and designated Anthropic a “supply chain risk”. The supply-chain move was a pretty dire threat — if enforced rigorously, it could cut Anthropic off from working with companies like Nvidia, Microsoft, and Google, which could kill the company outright. But like many Trump administration moves, it appears to have been more of a threat than an all-out attack — Anthropic has now resumed talks with the military, and it seems likely that they’ll come to some sort of agreement in the end.

But bad blood remains. Trump recently boasted that he “fired [Anthropic] like dogs”. Dario Amodei, Anthropic’s CEO, released a memo accusing OpenAI of lying to the public about its dealings with the DoW, said that OpenAI had given Trump “dictator-style praise”, and asserted that Anthropic’s concern was related to the DoW’s desire to use AI for mass surveillance.

What’s actually going on here? The easiest way to look at this is as a standard American partisan food-fight. Anthropic is more left-coded than the other AI companies, and the Trump administration hates anything left-coded. This probably explains most of the general public’s reaction to the dispute — if you ask your liberal friends what they think of the issue, they’ll probably support Anthropic, whereas your conservative friends will tend to support the DoW. Marc Andreessen probably put it best:

(The converse is also true.)

The Trump administration itself may also see this as a culture-war issue, as well as a struggle for control. But, at least in my own judgement, Anthropic is unlikely to see it this way. The company is not committed to progressive values writ large so much as it’s committed to the idea of AI alignment.

Like almost everyone in the AI model-making industry, Anthropic’s employees believe that they are literally creating a god, and that this god will come into its full existence sooner rather than later. But my experience talking to employees of both Anthropic and OpenAI has suggested that there’s a cultural difference between how the two companies think about their role in this process. Whereas, generally speaking, OpenAI employees tend to want to create the most capable and powerful god they can, as fast as they can, Anthropic employees tend to focus more on creating a benevolent god.

My intuition, therefore, suggests that Anthropic’s true concern — or at least, one of its major concerns — was that Trump’s Department of War would accidentally inculcate AI with anti-human values, increasing the chances of a future misaligned AGI that would be more likely to see humanity as a threat. In other words, I suspect the issue here was probably more about fear of Skynet,¹ and less about specific Trump policies, than people outside Anthropic realize.

But anyway, beyond both political differences and concerns about misaligned AGI, I think this situation illustrates a fundamental and inevitable conflict between human institutions — the nation-state and the corporation.