AI Apocalypse Looms. We’re Already Blowing It.

Surely there are more options than letting AI run wild or shutting it down, right? Right?

Several commercial ships are under fire near the Strait of Hormuz as Iran continues to try to block one of the world’s most important shipping lanes. The U.S. military is trying to prevent the Iranian Navy from laying mines in the key shipping channel. The chairman of the Joint Chiefs of Staff reported that the Iranians are already “adapting” as they target American air defenses and hotels frequented by U.S. service personnel.

And it seems like just last month Trump was boasting about low gas prices. Happy Wednesday.

Everybody Hates AI

by Andrew Egger

In the few short years since generative AI arrived on the scene, the technical capabilities of large language models have quickly gone from cool to remarkable to astonishing. As at any disruptive, revolutionary moment, the public-policy challenges are going to be massive. The laws governing AI use are non-existent; the political pressure to come up with some is intense and growing. After a decade-plus of general policy paralysis amid endless referendums on the person of Donald Trump, it’s not hard to imagine a world in which the 2028 election hinges instead on the future of American AI regulation.

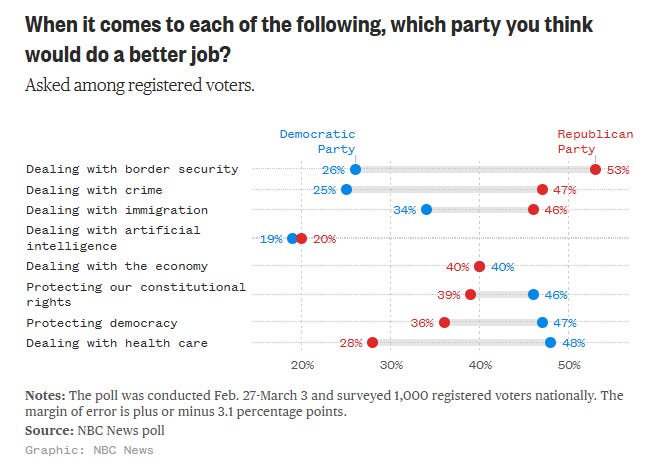

It’s hard to overstate, too, just how wide open the political terrain is. An NBC News poll released this week asked voters which party they most trusted across a number of different policy issues. Most were basically unsurprising: By a wide margin, Republicans were more trusted to deal with border security, immigration, and crime, while Democrats were favored for dealing with health care, protecting democracy, and protecting Americans’ constitutional rights. But when it came to AI, practically nobody had any confidence that either party had the edge. Just look at this graph:

That’s what opportunity looks like. The party that can win the argument over AI is set up for a political windfall—and then will be tasked with shepherding the country through massive disruption toward a prosperous rather than a dystopian AI-integrated future, if possible. No pressure.

But so far it seems like basically everybody is blowing it.

If the question politicians are asking themselves is merely “how can I reap a political benefit from AI policy,” there’s increasingly one answer that is unfortunately straightforward. At the moment, most people hate AI—which isn’t very surprising, since their exposure to its benefits has been much more muted than their exposure to its drawbacks. If you’re not an active AI user today, then AI isn’t much for you but a machine that spits out the deluge of scams and uncanny clickbait that clogs your social feeds, the slop that fills up online product reviews, and the glitchy chatbots you’re forced to dodge around if you want to speak to an actual customer service person—oh, and which you worry may be coming to take your job soon.

So for most populists—left and right—there’s an easy answer to the AI-policy debate: Just quit letting it happen. From Sen. Bernie Sanders to Florida Gov. Ron DeSantis, there’s a growing chorus of voices eager to strangle generative AI in the cradle, typically by banning the construction of the data centers that constitute its brains. “Why would we subsidize something,” DeSantis asked last year, “that could potentially cause problems for folks?” (For more establishment-ish types, there’s of course a different easy way to reap a political benefit: Just become an industry cheerleader and soak in the campaign cash.)

Many other legislative attempts to regulate AI are just as blinkered in their approach. Take just one state-level example. In New York, a bill that just sailed through a Senate committee would forbid AI chatbots from giving “any substantive response, information, or advice” about knowledge related to any field for which the state credentials practitioners—doctors, lawyers, engineers, therapists, social workers. A chatbot can’t replace a doctor or a lawyer, of course—but that doesn’t make it sensible for a legislature to try to wall off entire fields of knowledge out of fears that AI being able to inform you about them could theoretically harm existing professional groups. Ludicrously, the bill would also be enforced by a private right of action—meaning that if you could trick a chatbot into giving you medical or legal advice in New York, you’d be allowed to sue whoever made the chatbot.¹

But if some state attempts to regulate have been ham-handed, so too has been this White House’s approach. The Trump administration has attempted to prevent states from regulating AI altogether, and has in fact treated the very idea of doing anything to direct or restrain AI’s development and deployment as an unacceptable obstacle to winning the AI race against China. Under their accelerationist framework, even AI companies’ own internal controls are an intolerable barrier to speed. This month, Defense Secretary Pete Hegseth declared war on the AI company Anthropic over its refusal to allow its products to be used for mass surveillance of Americans or to control autonomous lethal weapons systems.

And we shouldn’t have much hope that it will be enough for the companies to regulate themselves. There’s too much of a race-to-the-bottom dynamic here, as the Pentagon dustup also demonstrates: Although Anthropic was willing to take an enormous financial hit to its business rather than bend on its redlines, other AI labs were waiting in the wings. Elon Musk’s xAI and Sam Altman’s OpenAI were all too happy to step up in Anthropic’s place on the terms Hegseth desired.²

I could go on and on, but you get the idea. At an existential moment that demands bold thinking and policy innovation, we’re stuck with the same old broken toolset: a paralyzed Congress in which even the simplest compromises, let alone groundbreaking policy work, seem impossible, and a whipsawing White House that wants to encourage AI development until the moment it decides to burn an AI company down out of pique. Maybe they’ll surprise us all and rise to the moment. Maybe someone new will show up to fill the void they’re leaving. I’m not holding my breath.

What kind of AI regulation would you want to see? And how much likelier would you be to vote for a politician if they had a smart idea about AI? Share your thoughts.