Technology


- Nvidia restarts H200 production

- Human error in Iran

- Defense tech’s moment

- Using AI to improve AI

- Tech CEOs’ God complex

Jensen Huang wants it all, and a startup is hiring actors and performers to help train LLMs on human tone and emotional expression.


For one of the busiest men on the planet, Nvidia CEO Jensen Huang sure has spent a lot of time talking this week. First came a three-hour speech at his signature conference, then a media session in which he revealed the “triangle” mantra sustaining him through the current race for AI dominance: “Don’t get fired, don’t be bored, and don’t die.” In this industry, “if you aren’t running, you’re being eaten,” he said. Moving too cautiously could get you fired, or worse.

Huang knows this because he can already feel competitors chipping away at Nvidia’s stranglehold on AI compute. Sure, Nvidia is so big and powerful, and the overall market is growing so quickly, that the company could keep doing the same thing and remain profitable for years. But four hours of Huang’s off-the-cuff comments suggest he is hell-bent on having it all, even if that means expanding into areas that put Nvidia in direct competition with some of its best customers.

Take OpenClaw, the open-source framework that lets anyone command an army of AI agents, which Huang repeatedly called a “big deal.” As it catches on with consumers and enterprise customers, it will drive a sharp increase in the consumption of AI tokens, a sizable chunk of which will be generated on Nvidia’s chips. But OpenClaw also opens a new playing field where competitors could try to take market share from Nvidia, and Nvidia is not content to simply enjoy a growing pie. On Monday, Nvidia announced its own platform for bringing OpenClaw to the enterprise (NemoClaw) and a collaboration among open-model builders to develop AI models specialized for specific business purposes. Both initiatives will put it in direct competition with the frontier AI labs it already supplies.

And OpenClaw is just one front in an increasingly diverse competitive landscape that stretches from quantum computing to videogames. It’s no wonder Huang says he’s busier than ever. Living within his triangle is hard work.


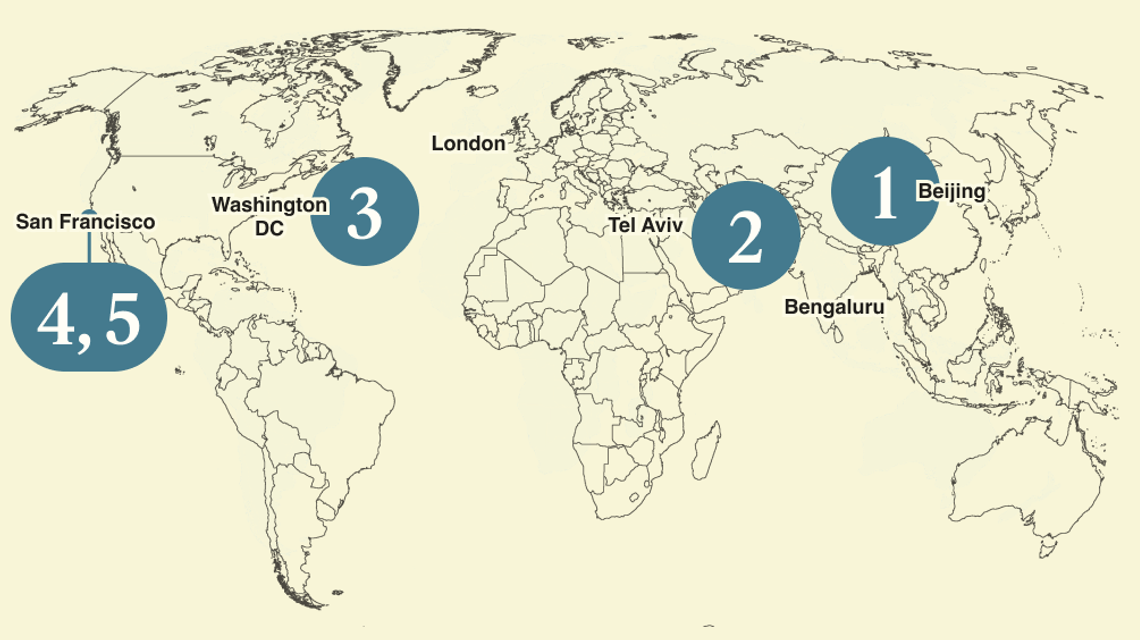

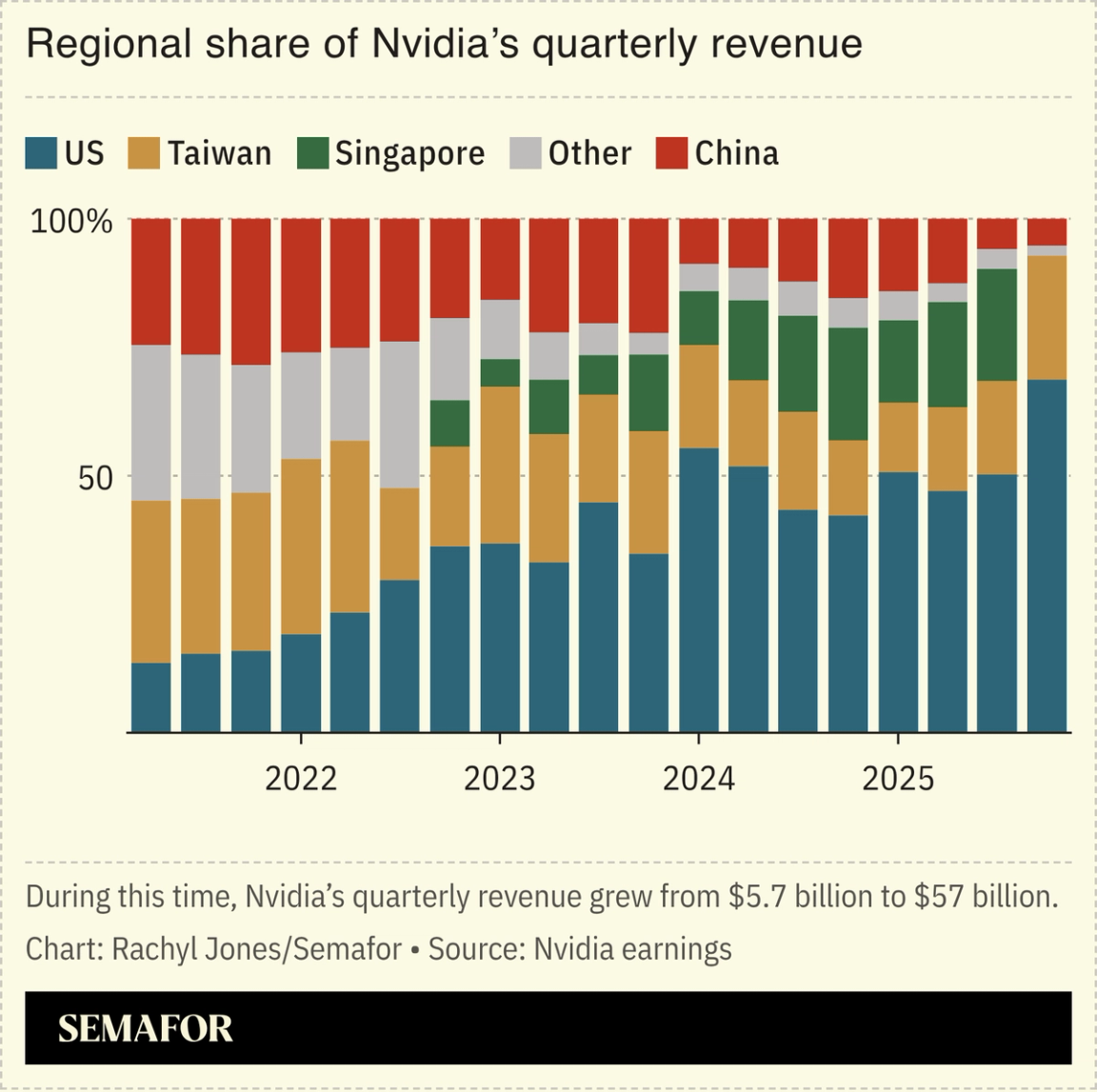

Nvidia restarts H200 chip production

Tucked inside the hours of Huang’s remarks was news that Nvidia is restarting manufacturing of its H200 processors, weeks after the company reportedly halted production of the powerful chips intended for the Chinese market. Huang said Nvidia had been licensed “for many customers in China for H200s,” acknowledging the change in outlook. Washington greenlit sales of the China-bound chips in December, but Beijing restricted Chinese companies from buying them amid its push to support homegrown champions. Huang highlighted how Nvidia, like just about every US company that does big business in China, is trying to balance competing pressures: Maintaining global market share, pleasing the US president, and complying with export controls. Huang said that while President Donald Trump aims to keep the US in a leadership position, “he would like us to compete worldwide and not concede those markets unnecessarily.”


Humans likely to blame in Iran school strike

People and rescue forces work following a reported strike on a school in Minab, Iran. Abbas Zakeri/Mehr News/WANA via Reuters.

In the days after the deadly US missile strike on an Iranian elementary school, one prime suspect emerged: artificial intelligence. Critics were eager to pin the more than 150 deaths on AI after reports of Claude’s use in the capture of Venezuela’s Nicolás Maduro, along with a public spat between the Pentagon and Anthropic, kicked off a global debate over the technology’s growing role in warfighting, Semafor’s Reed Albergotti writes. The reality, according to former military officials and people familiar with aspects of the bombing campaign in Iran, is that humans are to blame for the deaths at Shajareh Tayyebeh Elementary School, a tragedy that has cast a shadow over the US and Israeli war on Iran. Specifically, the blame lies with the thousands of people who gather intelligence and analyze satellite photos to build massive target lists ahead of potential conflicts with foreign adversaries. The error was one that AI would be unlikely to make: US officials failed to recognize subtle changes in satellite imagery, while human intelligence analysts missed publicly available information about a school located inside the Revolutionary Guard compound (or failed to add it to the database used for targeting). AI has its notorious failings, from hallucinations to sycophancy, but it can also take in far more information than current, human-led systems, and a deeper look at satellite imagery, or simply an internet search, could have forestalled the disaster. Even a scan of Iranian business listings turned up the school, according to Reuters.
“Finding the right balance between humans and machines will be a crucial component of future training,” said Jack Shanahan, a senior fellow at the Center for a New American Security and a 36-year veteran of the US military, where he served as the founding director of Project Maven, the Pentagon’s AI program.


Defense tech is the latest risk-capital parking spot

Joshua Roberts/File Photo/Reuters

The US military-industrial complex is quickly morphing into a military-industrial-financial complex, in which risk capital is flowing toward increasingly specialized defense bets, Semafor’s Liz Hoffman argued. The shift is spurred by “political expedience, genuine patriotism, the whiff of Pentagon profits, and a belief in Silicon Valley that they can disrupt this, too,” Liz wrote. US robotics startup Gecko on Tuesday announced a $71 million deal with the Navy to speed up ship repairs, and the Pentagon is hunting for investment bankers to strike more defense deals. AI adds another layer: The Pentagon is now reportedly looking for alternatives to Anthropic’s tools after the two clashed over the military’s use of Anthropic’s AI model.


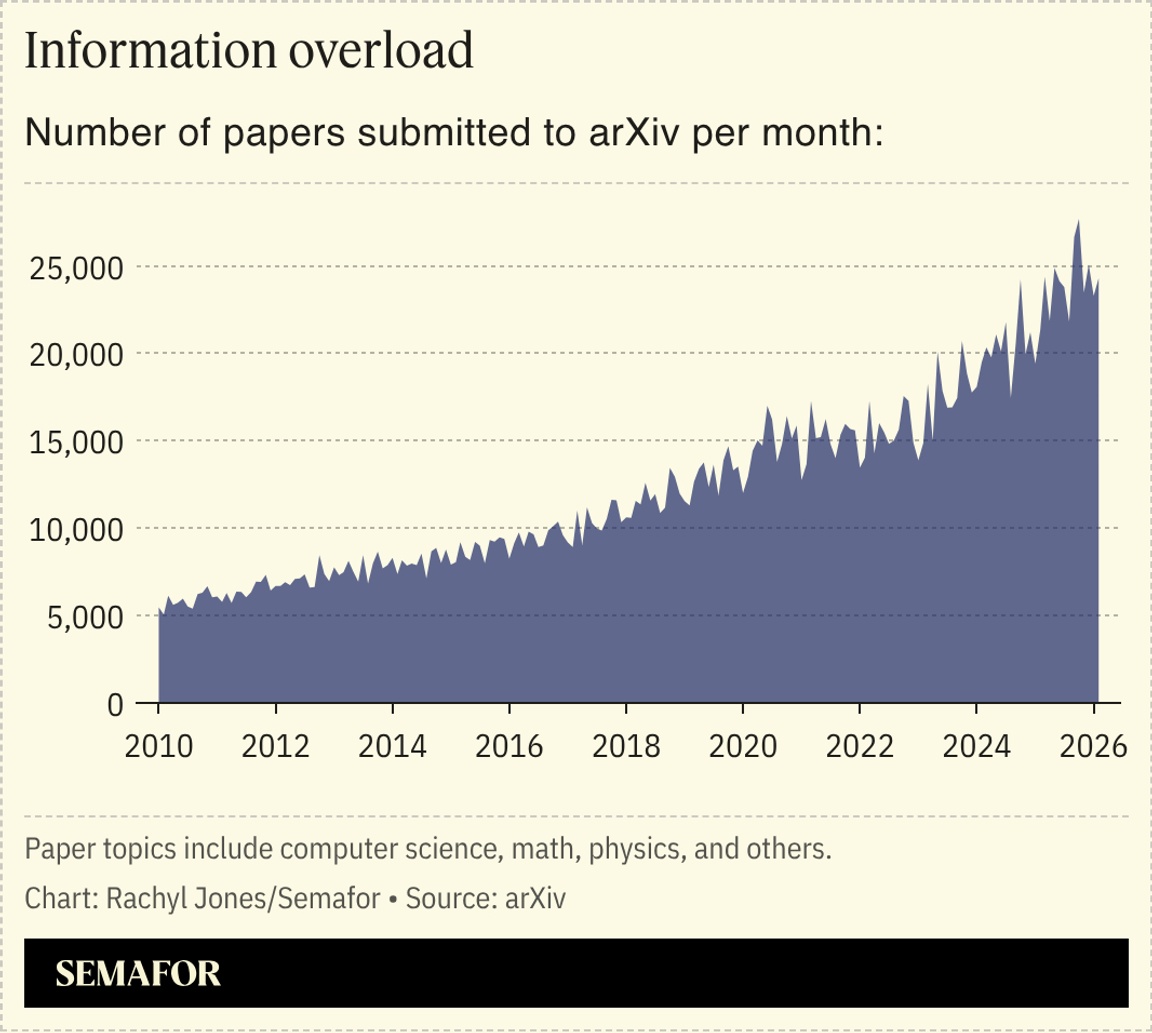

Autonomous labs seek to solve human overload

As humans struggle to keep up with the tens of thousands of new scientific research papers now submitted to archives and journals each year, one startup says we should quit while we’re behind. Autoscience, part of a wave of so-called autonomous labs that use AI to improve AI, has developed a system of agents that read publicly available research papers, plug into companies’ machine learning tools, and run experiments to improve models. “Reading 10 papers a week is very hard. AI systems can read 17,000 and figure out which ones were relevant for your specific modeling problem,” Autoscience CEO Eliot Cowan told Semafor. The volume of papers has already outstripped what humans can parse, a problem researchers now need AI to solve, he said. The company, which just raised $14 million in seed funding from General Catalyst, Toyota Ventures, and Perplexity Fund, is among a growing number of self-driving labs drawing investor attention for their use of technologies that can reason about new hypotheses. OpenAI has begun experimenting with the technology, and a Singapore-based lab running pharmaceutical and biotechnology experiments raised $45 million in December. — Rachyl Jones


Tech CEOs need to leave their ‘God complex’ behind

Should Silicon Valley’s push into defense come with a kill switch? David Ulevitch, the partner who runs a16z’s America-first American Dynamism Fund, says no: If tech companies want to do business with the government, they have to trust it. He said Anthropic’s rift with the Pentagon won’t be a “company-killing event” but rather a “sorting algorithm” for employees in AI. “I don’t want to be the person they call and say, can we hit the enter button on this command or not,” Ulevitch said in the latest episode of Semafor’s Compound Interest show. “But I also don’t have a God complex.” The same logic applies to voters, who will decide how AI is used in their communities: Ulevitch is on the board of Flock Safety, the camera company whose partnership with Amazon’s Ring doorbells was recently scrapped after a Super Bowl ad sparked a panopticon-wary backlash. Listen to Compound Interest for Ulevitch’s view on whether venture capital, with its software and capital-light roots, has a right to play in heavy, hardware-focused industries like space and energy.


Semafor today announced a new slate of CEOs and global leaders joining more than 450 top executives at the 2026 Annual Convening of the Semafor World Economy, taking place April 13–17 in Washington, DC, and released the conference’s agenda. Billed as the definitive live journalism platform on the new economy, the convening will bring together US Cabinet secretaries, central bank governors, finance ministers, and Fortune 500 CEOs for five days of onstage conversations and in-depth interviews in which private and public sector leaders will exchange ideas that will shape the future of the world economy.


Actors on stage after a performance of Phantom of the Opera in 2021. Caitlin Ochs/Reuters.

An AI data company is seeking “actors, improvisers, and performers” to help train large language models to understand human tone and emotional expression. The rise of sophisticated AI has created a sub-industry of data providers who supply niche, specialist knowledge. AI’s capabilities are “jagged,” The Verge noted, meaning models are great at some complex tasks and rubbish at seemingly easier ones, often because those tasks lack sufficient interpretable, labeled data. One such task is reading human emotions, and the growth of AI voice chat makes believable emotional expression increasingly important. The data company, Handshake, has seen a threefold increase in demand since last summer and has a network of white-collar professionals, from chemists to screenwriters, providing data.
