Last night, I cautioned that there was a lot going on and that we would be playing catch-up today. There is so much that I’m trying to highlight some of the most significant developments in standalone pieces to make them digestible. Earlier today, we took up DOJ’s release of information related to the now-dismissed classified documents case, a release that can’t have made Donald Trump happy. Here’s today’s second installment.

“The United States of America will never allow a radical left, woke company to dictate how our great military fights and wins wars,” Donald Trump posted on social media after Anthropic, maker of the AI model Claude, pushed back when the Department of Defense wanted to cross ethical red lines Anthropic had set for the use of its product. Anthropic opposed the use of its model for fully autonomous weapons or domestic mass surveillance.

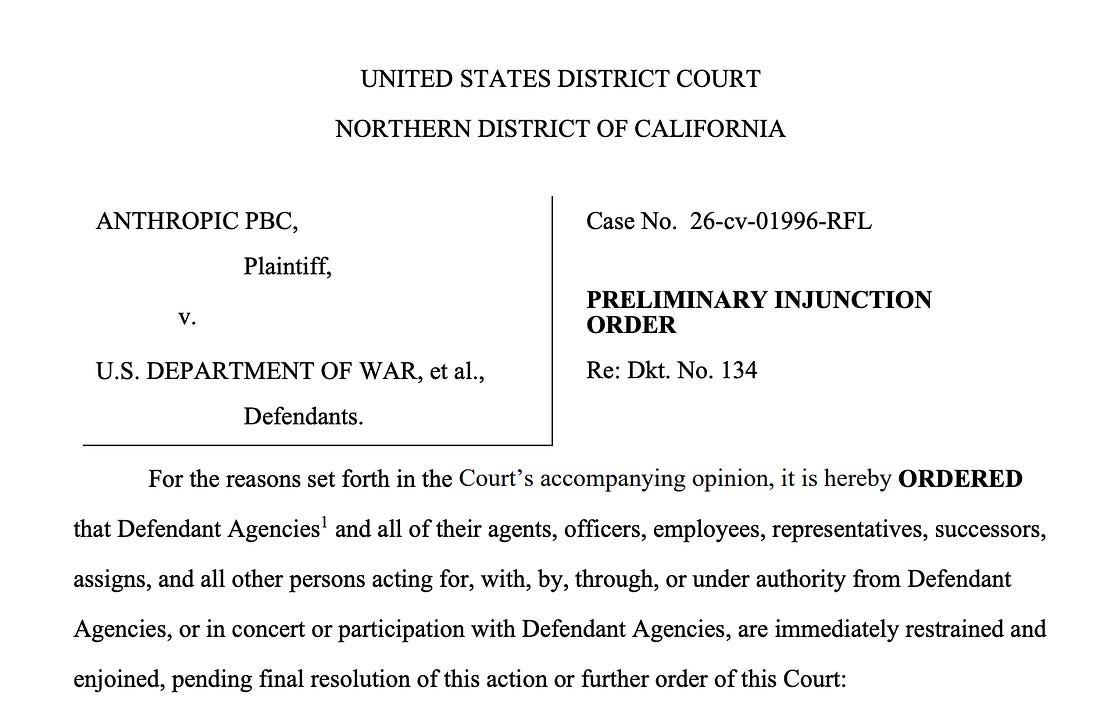

The Trump administration responded by designating Anthropic a “supply chain risk,” a step that compromised not only Anthropic’s work with the government but also its work with virtually any other entity that wanted to do business with the Pentagon. Trump ordered all U.S. agencies to stop using the company’s products. Anthropic was essentially blacklisted. So they sued. We discussed that when it happened. Tonight, Judge Rita F. Lin in the Northern District of California entered the preliminary injunction Anthropic requested, blocking the Pentagon’s order.

She wrote: “Nothing in the governing statute supports the Orwellian notion that an American company may be branded a potential adversary and saboteur of the U.S. for expressing disagreement with the government.”

The dispute is not about whether the government can decide to replace Anthropic with another, more compliant AI partner. It can, and Judge Lin explicitly said so. Before the Department of Defense took the retaliatory step of designating it a supply chain risk, Anthropic also said so, and offered to help the government transition to a relationship with another company. The significance of Judge Lin’s order is that it prohibits the administration from taking retaliatory steps against the company for refusing to violate its own red lines.

The government could try to appeal this decision, or simply move on and separate from Anthropic. And there is still a parallel lawsuit before the Court of Appeals in Washington, D.C., that hasn’t been decided. So the case is far from over, but at least for now, another federal judge has rejected the administration’s efforts to come down hard on anyone who opposes it. Judge Lin ruled that the government can’t punish Anthropic for refusing to cross the ethical boundaries it has set for itself.

Anthropic was founded by former OpenAI executives. They explain their mission like this: “Every day, we make critical decisions that inform our ability to achieve our mission. Shaping the future of AI and, in turn, the future of our world is a responsibility and a privilege. Our values guide how we work together, the decisions we make, and ultimately how we show up for each other and work toward our mission.” Anthropic is the first U.S. company to receive the supply chain risk designation. Before this, it had been reserved for foreign companies that pose…an actual risk to the supply chain.

In a hearing earlier this week, the parties hashed out some of the issues in court before Judge Lin. She commented on the unusual designation of an American company, saying that the provision was typically directed at foreign terrorists and other hostile actors. While DoD was free to stop using Claude, in her view, it looked like the government was punishing Anthropic for publicizing its dispute with the government over how Claude could be used. She seemed inclined to believe that Anthropic had the right to discuss its dispute with the government publicly, and that trying to prevent it from doing so violated the First Amendment.

Her order prohibits the government from “implementing, applying, or enforcing” the February 27, 2026, Presidential Directive ordering all federal agencies to stop using Anthropic’s technology. It has various additional provisions designed to prevent agencies from acting based on the directive and to prevent the government from looking for ways to have government employees or contractors implement it. The government is also banned from “implementing, applying or enforcing in any manner” Hegseth’s designation of Anthropic as a supply chain risk. The judge gave the Trump administration until April 6 to file a status report describing the steps it has taken to ensure the government is complying with the court’s order. She is also requiring a certificate of compliance with the order’s requirements. Although this case isn’t over, it’s headed in the right direction.

If you read Civil Discourse because you want to understand what the headlines actually mean—and not just react to them—paid subscribers make that level of analysis possible. For the price of a couple cups of coffee each month, you get the perspective of someone who has spent decades inside the legal system explaining how the pieces really fit together.

We’re in this together,

Joyce
