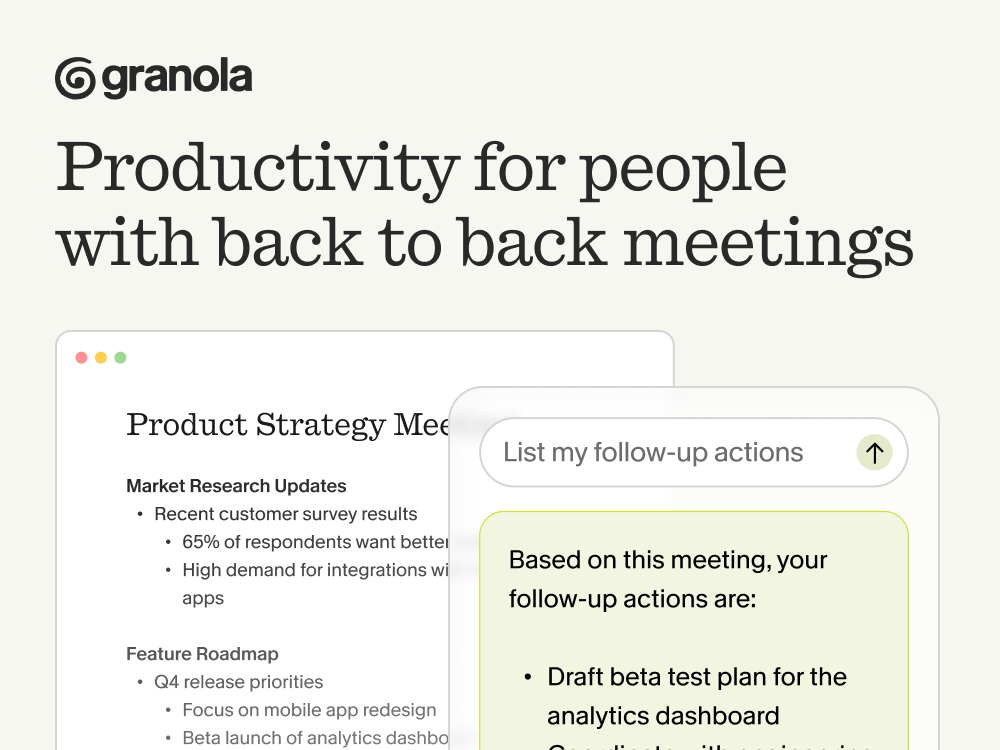

Enterprise cybersecurity is entering a new phase, one where AI copilots are no longer experimental features but core operational tooling.

Platforms like Microsoft Security Copilot, along with the expanding AI capabilities in Palo Alto Networks’ security platforms, are rapidly automating tasks that historically consumed analysts’ time: alert triage, threat correlation, investigation summarization, and even remediation guidance. For Security Operations Centers (SOCs), this is a major shift. AI promises to collapse investigation time, reduce alert fatigue, and surface attack patterns that humans might miss.

But as many SOC teams are starting to realize, deploying AI in security workflows introduces a new challenge that isn’t purely technical—it’s cognitive.

When an AI copilot recommends isolating a host, flagging a lateral movement pattern, or escalating a potential credential compromise, the analyst staring at that dashboard has to decide in seconds whether to trust the system. If the AI behaves like a black box, two failure modes immediately emerge: analysts may either over-trust the recommendation and accept it blindly, or under-trust it and ignore valuable signals.

In a high-stakes environment where minutes matter, both outcomes are dangerous.

This is precisely where human-centered explainability becomes critical. The emerging insight from recent research is that explainability in cybersecurity is less about the internals of the model and more about how the reasoning is surfaced through the interface the analyst actually uses.

Explainability Is a UI Problem

Traditional explainable AI research has largely focused on the model layer. Researchers have spent years developing techniques like feature attribution, saliency maps, attention visualization, and other ways to interpret what happens inside neural networks.

Those methods are valuable for model auditing, but they rarely map cleanly to real operational workflows.

SOC analysts are not inspecting gradients or feature contributions while triaging an alert queue. Their mental model is closer to investigative reasoning: What happened? Why does the system think it’s malicious? What would change the conclusion?

The gap between technical explainability and operational usability is where many AI systems fail to gain traction. An algorithm might technically expose its reasoning, but if the interface presents that explanation poorly—or overloads the analyst with irrelevant details—the result is still confusion.

In practice, explainability succeeds or fails at the user interface layer.

Four Explanation Strategies That Shape Analyst Decision-Making

To explore how explanation design affects security workflows, researchers prototyped four explanation strategies within a SIEM-style dashboard. Each one represents a different philosophy for communicating AI reasoning.

Confidence Visualization

The simplest approach is to expose probability estimates or risk scores. These appear as visual indicators—color gradients, confidence bars, or probability percentages—that communicate how strongly the AI believes an alert is malicious.

This approach is extremely efficient. Analysts can scan dozens of alerts quickly and prioritize the highest-confidence threats.

The drawback is that confidence alone doesn’t answer the question analysts instinctively ask: why?

A confidence score without context risks being interpreted as a black-box assertion.
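
As a rough illustration of what a confidence indicator might compute behind the scenes, the sketch below maps a model probability to a label, a color, and a text bar. The thresholds, band names, and function names are assumptions made for this example; they do not come from any particular SIEM or copilot product.

```python
from typing import List, Tuple

# Hypothetical thresholds, highest first. A real SOC would tune these
# against its own alert history rather than use fixed values.
BANDS: List[Tuple[float, str, str]] = [
    (0.80, "high", "red"),
    (0.50, "medium", "amber"),
    (0.00, "low", "green"),
]


def confidence_band(score: float) -> Tuple[str, str]:
    """Map a probability in [0, 1] to a (label, color) pair."""
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"score must be in [0, 1], got {score}")
    for threshold, label, color in BANDS:
        if score >= threshold:
            return label, color
    # Unreachable while the last threshold is 0.0; kept as a safe fallback.
    return BANDS[-1][1], BANDS[-1][2]


def render_bar(score: float, width: int = 10) -> str:
    """Render the score as a fixed-width text bar for a queue view."""
    filled = round(score * width)
    return "#" * filled + "-" * (width - filled)


if __name__ == "__main__":
    for s in (0.92, 0.78, 0.31):
        label, color = confidence_band(s)
        print(f"{render_bar(s)} {s:.0%} {label} ({color})")
```

Note that nothing in this output says why a score is 78% rather than 42%, which is exactly the limitation described above.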

Natural Language Rationale

The second approach attempts to bridge that gap with structured natural-language explanations. These resemble the short investigative summaries many analysts already write manually.

For example:

“This alert is likely malicious due to abnormal lateral movement activity originating from a compromised workstation and matching known credential-dumping patterns.”

These explanations are typically generated through templated reasoning structures—observation, inference, and conclusion.

Natural language explanations dramatically improve readability, particularly for junior analysts or teams under time pressure. However, they introduce a subtle risk: language can sound authoritative even when the underlying reasoning is uncertain. If phrased confidently, these explanations may inadvertently encourage over-trust.
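
One way to reduce that risk is to tie the wording of the conclusion to the model's confidence, so the sentence cannot sound more certain than the score behind it. The sketch below fills an observation, inference, conclusion template and picks a hedge phrase from the confidence value. The cutoffs, phrases, and function names are illustrative assumptions, not a description of how any specific copilot generates text.

```python
from typing import List


def hedge_phrase(confidence: float) -> str:
    """Pick wording whose strength matches the model confidence.

    The cutoffs are hypothetical and would need calibration in practice.
    """
    if confidence >= 0.9:
        return "is very likely"
    if confidence >= 0.7:
        return "is likely"
    if confidence >= 0.5:
        return "may be"
    return "is unlikely to be"


def build_rationale(observations: List[str], inference: str, confidence: float) -> str:
    """Fill an observation / inference / conclusion template."""
    observed = "; ".join(observations)
    return (
        f"Observation: {observed}. "
        f"Inference: {inference}. "
        f"Conclusion: this alert {hedge_phrase(confidence)} malicious "
        f"(model confidence {confidence:.0%})."
    )


if __name__ == "__main__":
    print(
        build_rationale(
            ["abnormal lateral movement from a workstation",
             "activity matches known credential-dumping patterns"],
            "the workstation may be compromised",
            0.78,
        )
    )
```

Printing the numeric confidence next to the sentence gives the analyst a quick check on whether the prose and the score agree.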

Counterfactual Explanations

A more advanced approach introduces counterfactual reasoning, which lets analysts explore how the model’s decision would change if certain inputs were different.

For instance:

“If the source IP reputation score were neutral rather than malicious, the alert risk would drop from 78% to 42%.”

Interactive controls—sliders, toggles, or expandable panels—allow users to manipulate variables and observe how the risk assessment shifts.

This technique aligns strongly with how engineers and analysts think. Instead of being handed an answer, they can test the system’s logic directly. The trade-off is cognitive load; interacting with counterfactual explanations requires more time and attention.
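
To make the mechanics concrete, here is a minimal sketch of a counterfactual query against a toy logistic risk model. The feature names, weights, and bias are invented for illustration and will not reproduce the 78% and 42% figures quoted above; a production system would query its actual detection model instead.

```python
import math
from typing import Dict, Tuple

# Hypothetical weights for a toy logistic risk model.
WEIGHTS: Dict[str, float] = {
    "ip_reputation_malicious": 1.6,
    "lateral_movement": 1.2,
    "off_hours_login": 0.5,
}
BIAS = -1.5


def risk(features: Dict[str, float]) -> float:
    """Return a risk probability in (0, 1) from binary or scaled features."""
    z = BIAS + sum(w * features.get(name, 0.0) for name, w in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))


def counterfactual(
    features: Dict[str, float], name: str, new_value: float
) -> Tuple[float, float]:
    """Return (baseline_risk, risk_if_changed) without mutating the input."""
    changed = {**features, name: new_value}
    return risk(features), risk(changed)


if __name__ == "__main__":
    alert = {"ip_reputation_malicious": 1.0, "lateral_movement": 1.0, "off_hours_login": 1.0}
    before, after = counterfactual(alert, "ip_reputation_malicious", 0.0)
    print(f"If the source IP reputation were neutral, risk would move "
          f"from {before:.0%} to {after:.0%}.")
```

A slider or toggle in the dashboard would call something like `counterfactual` on each change and redraw the score, which is where the extra time and attention cost comes from.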

Hybrid Explanations

The most effective design combines these approaches using progressive disclosure. Analysts first see a quick confidence signal, then optionally expand the interface to reveal natural language explanations and counterfactual exploration tools.

This layered model mirrors how investigations actually unfold: quick triage first, deeper reasoning only when necessary.
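
Progressive disclosure can be sketched as a single explanation object that renders more detail as the analyst expands it. The class name, field names, and the three disclosure levels below are assumptions chosen for this example, not part of the prototype described in the research.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AlertExplanation:
    """Hypothetical container for one alert's layered explanation."""
    alert_id: str
    confidence: float
    rationale: str
    counterfactuals: List[str] = field(default_factory=list)

    def render(self, level: int = 0) -> List[str]:
        """Return display lines for a disclosure level.

        level 0: confidence only (queue triage)
        level 1: adds the natural-language rationale
        level 2: adds counterfactual statements
        """
        lines = [f"[{self.alert_id}] confidence {self.confidence:.0%}"]
        if level >= 1:
            lines.append(f"Why: {self.rationale}")
        if level >= 2:
            lines.extend(f"What if: {c}" for c in self.counterfactuals)
        return lines


if __name__ == "__main__":
    exp = AlertExplanation(
        alert_id="A-1042",
        confidence=0.78,
        rationale="abnormal lateral movement matching credential-dumping patterns",
        counterfactuals=["a neutral source IP reputation would lower the risk"],
    )
    for level in range(3):
        print("\n".join(exp.render(level)), end="\n\n")
```

Keeping level 0 to a single line preserves the scanning speed of a confidence-only view, while the deeper levels are computed and shown only when the analyst asks for them.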

What the Data Reveals About Analyst Behavior

In controlled experiments involving both experienced analysts and trained participants, explanation