|

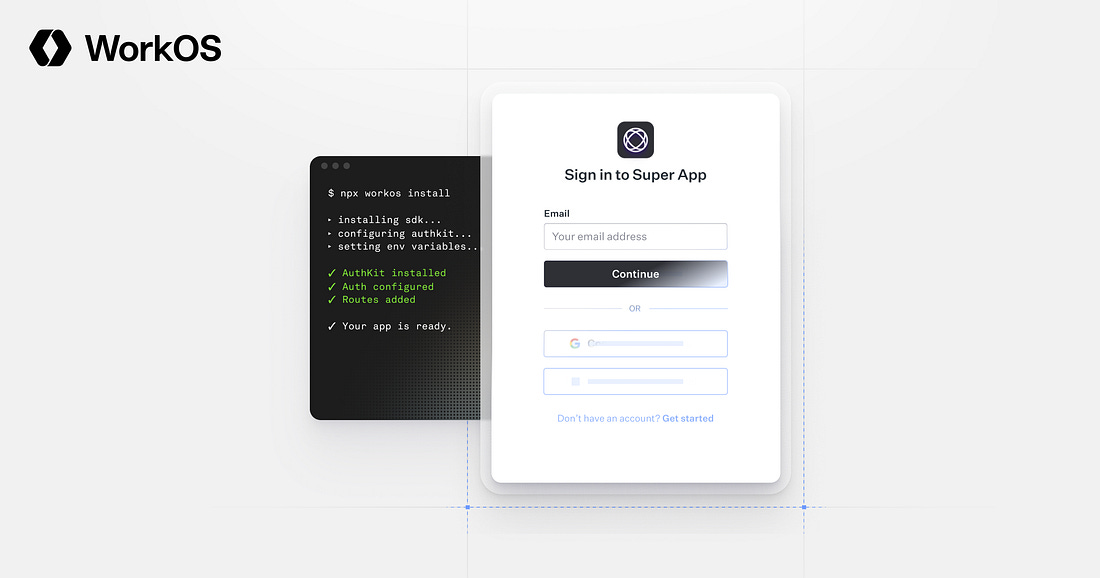

npx workos: From Auth Integration to Environment Management, Zero ClickOps (Sponsored)

npx workos@latest launches an AI agent, powered by Claude, that reads your project, detects your framework, and writes a complete auth integration into your codebase. No signup required. It creates an environment, populates your keys, and you claim your account later when you're ready.

But the CLI goes way beyond installation. WorkOS Skills make your coding agent a WorkOS expert. workos seed defines your environment as code. workos doctor finds and fixes misconfigurations. And once you're authenticated, your agent can manage users, orgs, and environments directly from the terminal. No more ClickOps.

GitHub built an AI agent that can fix documentation, write tests, and refactor code while you sleep. Then they designed their entire security architecture around the assumption that this agent might try to steal your API keys, spam your repository with garbage, and leak your secrets to the internet.

This can be considered paranoia, but it’s the only responsible way to put a non-deterministic system inside your CI/CD pipeline.

GitHub Agentic Workflows let you plug AI agents into GitHub Actions so they can triage issues, generate pull requests, and handle routine maintenance without human supervision. The appeal is clear, but so is the risk. These agents consume untrusted inputs, make decisions at runtime, and can be manipulated through prompt injection, where carefully crafted text tricks the agent into doing things it wasn’t supposed to do.

In this article, we will look at how GitHub built a security architecture that assumes the agent is already compromised. However, to understand their solution, you first need to understand why the problem is harder than it looks.

Disclaimer: This post is based on publicly shared details from the GitHub Engineering Team. Please comment if you notice any inaccuracies.

Why Agents Break the CI/CD Contract

CI/CD pipelines are built on a simple assumption. The developers define the steps, the system runs them, and every execution is predictable. All the components in a pipeline share a single trust domain, meaning they can all see the same secrets, access the same files, and talk to the same network. That shared environment is actually a feature for traditional automation. When every component is a deterministic script, sharing a trust domain makes everything composable and fast.

Agents break that assumption completely because they don’t follow a fixed script. They reason over repository state, consume inputs they weren’t specifically designed for, and make decisions at runtime. A traditional CI step either does exactly what you coded it to do or fails. An agent might do something you never anticipated, especially if it processes an input designed to manipulate it.

GitHub’s threat model for Agentic Workflows is blunt.

They assume the agent will try to read and write state that it shouldn’t, communicate over unintended channels, and abuse legitimate channels to perform unwanted actions. For example, a prompt-injected agent with access to shell commands can read configuration files, SSH keys, and Linux /proc state to discover credentials. It can scan workflow logs for tokens. Once it has those secrets, it can encode them into a public-facing GitHub object like an issue comment or pull request for an attacker to retrieve later. The agent isn’t actively malicious, but following instructions that it couldn’t distinguish between legitimate ones.

In a standard GitHub Actions setup, everything runs in the same trust domain on top of a runner virtual machine. A rogue agent could interfere with MCP servers (the tools that extend what an agent can do), access authentication secrets stored in environment variables, and make network requests to arbitrary hosts. A single compromised component gets access to everything. The problem isn’t that Actions are insecure. It’s that agents change the assumptions that made a shared trust domain safe in the first place.

[Live on May 6] Stop babysitting your agents (Sponsored)

Agents can generate code. Getting it right for your system, team conventions, and past decisions is the hard part. You end up babysitting the agent and watch the token costs climb.

More MCPs, rules, and bigger context windows give agents access to information, but not understanding. The teams pulling ahead have a context engine to give agents only what they need for the task at hand.

Our April webinar filled up, so we are bringing it back! Join us live (FREE) on May 6 to see:

Where teams get stuck on the AI maturity curve and why common fixes fall short

How a context engine solves for quality, efficiency, and cost

Live demo: the same coding task with and without a context engine

Three Layers of Distrust

GitHub Agentic Workflows use a layered security architecture with three distinct levels.